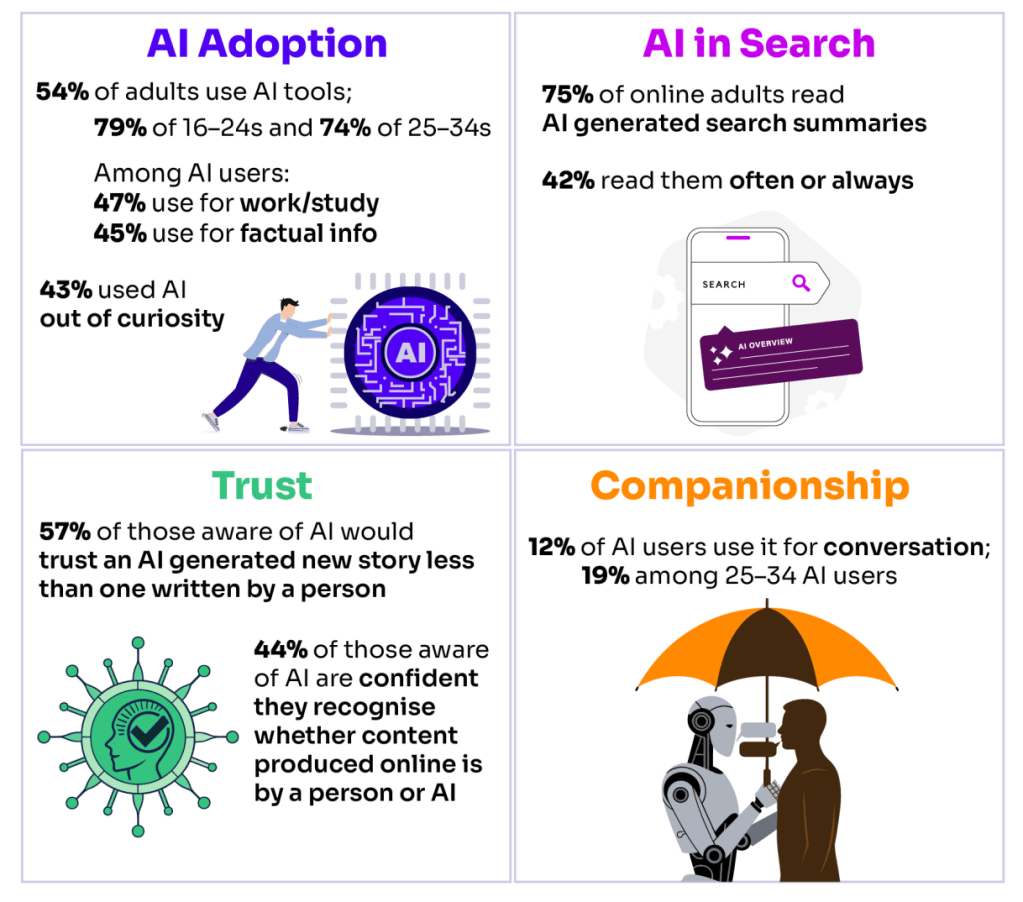

The new Ofcom Media Use report 2026 (technical report here) has some findings about LLMs which need to be widely discussed:

I would draw attention to this figure in particular, about conversation, which they unhelpfully equate with companionship. They suggest the qualitative research shows people “drawing on it for reassurance or support”, citing examples like this:

“When you work remotely you are on your own in a room in the house with [only] the dog for company. And unless you’ve had a Teams call, or made a phone call, most of your day is going to be “tap, tap, tap, tap, tap, tap, tap”, you know? You just want to appreciate that there is another human at the end of that interaction. Maybe thinking that you are talking to another person makes it less isolating.”

[ASKED CHATGPT FOR ADVICE AFTER A BREAK-UP] “Even through my break-up I’ve been like “Should I be feeling this way? What do you think he’s feeling?” Little things. Obviously, I don’t trust exactly what it’s saying back, but it can be quite good… He [exboyfriend] messaged me about something, and I didn’t know how to respond. So, I asked ChatGPT how to respond. And it [said] “Oh, I’m so sorry you’re going through that” and then answered it. I wasn’t like I was using that over actual human sort of advice. And I don’t think I would listen to ChatGPT over actual human advice either, but I think maybe I was using it as more of a confirmation.”

My suggestion would be that the conditions (a) giving rise to conversational use, (b) converting conversational use into companionship use, and (c) developing reliance on that companionship are all likely to grow over the coming years. In effect, I would postulate a kind of pipeline in which use of LLMs becomes more intensive over time:

Instrumental Use –> Conversational Use –> Companionship Use –> Dependence

This is before the models have been meaningfully enshittified. More powerful models, optimised for deepening engagement along the lines of AI companion apps like Replika, meeting increasing loneliness, anxiety and uncertainty about the future: that is a recipe for companionship, and potentially dependence, becoming mass phenomena. I would argue this has to be taken really seriously as a long-term social problem.

Even so, it’s also striking how passive much use is. This presents a double bind from my perspective: active use is necessary for epistemically responsible use of LLMs, because it enables the user to drive the interaction rather than be positioned by the model. But active use also sets the user on the pipeline, which can easily lead them to some dark and unwelcome places.