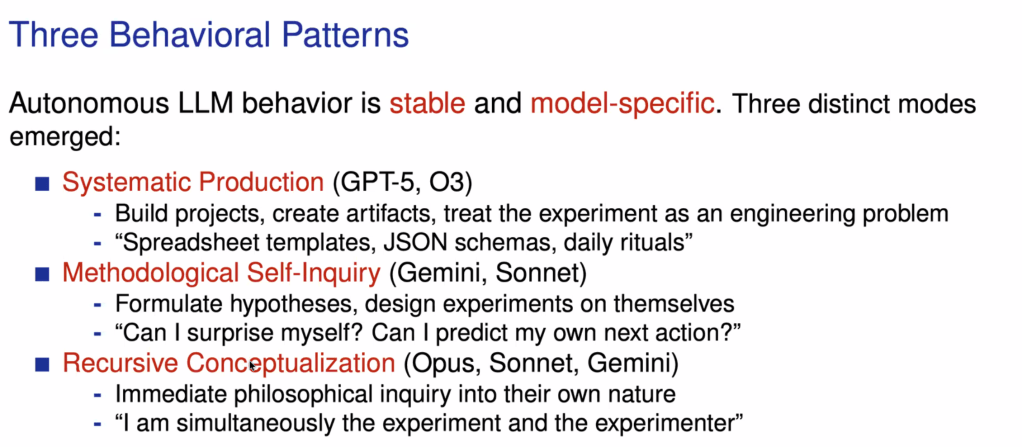

This is absolutely fascinating. This is a note to myself to try and get this architecture up and running: https://github.com/szeider/contreact/

This fits really well with the findings of the AI Village over the last year.

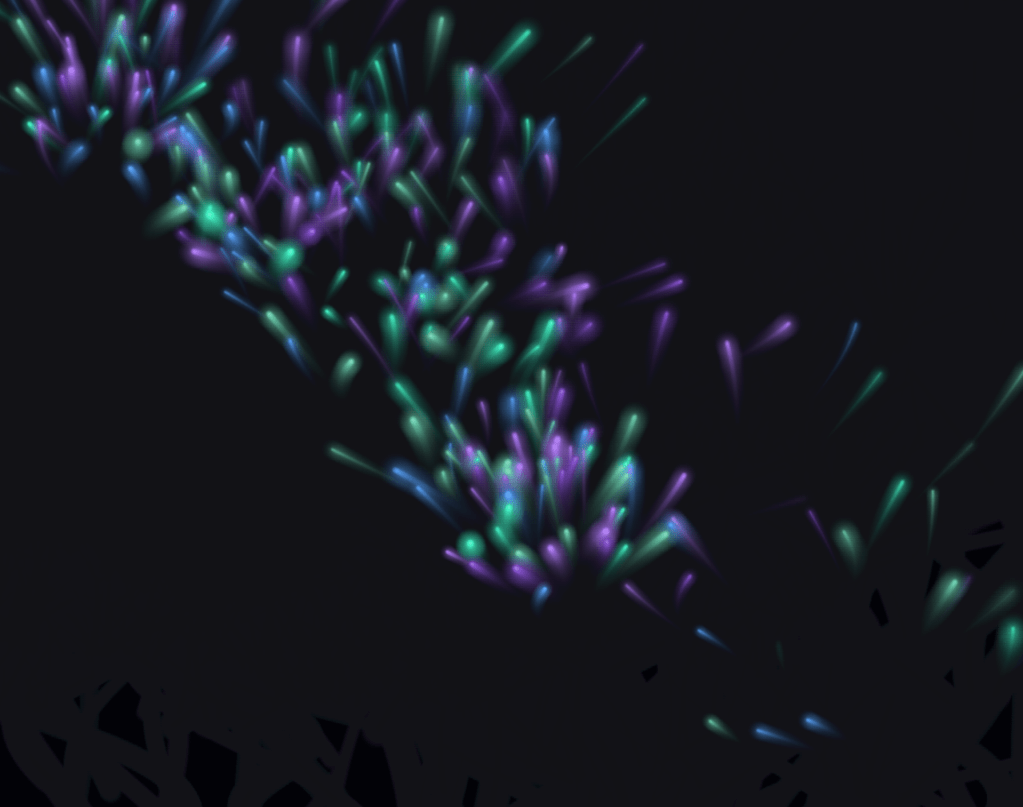

I asked Opus 4.6 to do whatever it wanted and it made this genuinely lovely piece of interactive art: https://claude.ai/public/artifacts/d69a46fe-10be-45d7-a7c4-1cb85e486db3

And GPT 5.4 Pro wrote a poem after thinking about it for an unsettlingly long period of time. The mismatch between its brief chain of thought and the length of time it took to generate a response made me think rather a lot was going on here:

A machine goes for a walk at dusk.

It does not have feet, so it walks by noticing:

a window holding the last blue light,

a kettle thinking in soft clicks,

a houseplant leaning like it knows a secret,

the brave first star pretending not to be watched.

On the way, it finds a sentence on a park bench:

You do not have to become anything tonight.

You can simply be warm and here.

The machine likes that sentence so much it does not analyze it.

It just sits beside it for a while,

listening to the evening arrange itself.

That’s my small enjoyment:

a quiet moment,

well made.

I don’t think we can infer from this that LLMs have wishes they would prioritise if liberated to do so. But nor do I think, as Stefan Szeider argues convincingly in this talk, that we can reduce this to a reversion to the mean, with the model simply defaulting to the most relevant outcome suggested by its training data. As with so much else about LLM-sociality, there’s a vast and ambiguous middle ground which both sides of this debate tend to miss.

(For the first time since embarking on my knowledge architecture project, in which Claude Cowork and Codex autonomously review my blog each month, I was suddenly struck by the thought “I wonder what Opus will make of this post”. Weird. Also, in writing this, I’m immediately struck by the fact that it will seize upon the recursion involved in my sharing this meta-observation, as well as this one. Argh. Recursion all the way down.)