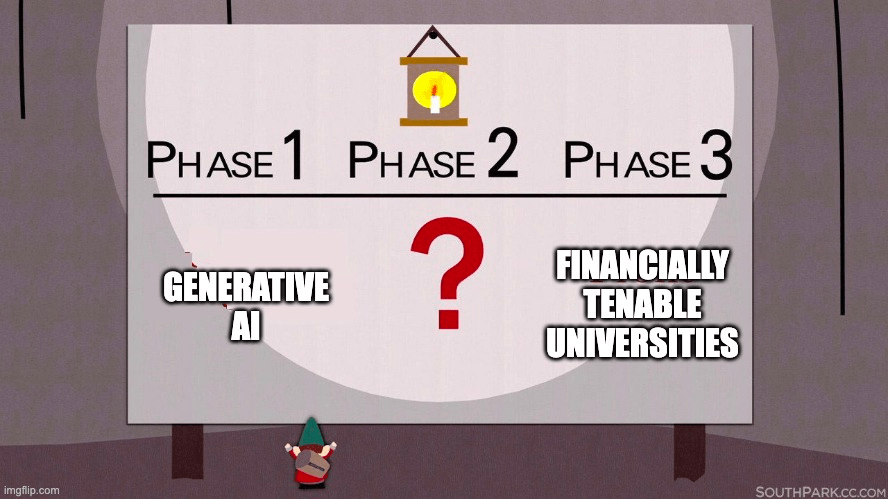

I’m increasingly preoccupied by the prospect that a radical wave of automation will sweep universities over the coming years. The political deadlock over university funding in the UK means there is little prospect of a radical shift in the amount of public money entering the system, leaving institutions trapped between a reliance on international PGT students (increasingly a target of rising nativist sentiment), the declining real value of student fees, an increased cost of borrowing and the full range of inflationary pressures which have been impacting every organisation. These pressures give management a vested interest in stridency during industrial disputes, but in the absence of at least some concession the disputes over pension contributions and pay & conditions will keep recurring. There’s a real possibility that continuous industrial upheaval and/or intensification of labour (i.e. asking staff to do more with less) will damage the UK’s attractiveness for international students, undermining the one part of the system which is currently working on a narrowly financial level. Under these conditions automation will inevitably be seized upon as a possible means to square the financial circle by, in some ambiguous sense, increasing productivity.

There are two intuitions about automation through artificial intelligence which are in widespread circulation at the moment. The first is that AI will replace existing jobs in the long-heralded rise of the robots; the second is that it will ‘free up’ workers to focus on more creative aspects of their roles. There’s no inherent contradiction between these intuitions, in the sense that some workers might be replaced while others are freed up.

It is easy for securely placed academics to imagine their own work in these terms. To the extent your work is organised in what Emmanuel Lazega calls a collegial mode, there is a substantial degree of autonomy you exercise over your (predominantly non-routine) activity. Furthermore there is a tendency towards what Lambros Fatsis and I described as a forgetfulness of technics, i.e. a general disinterest in the reliance upon infrastructure which leaves relatively autonomous labour hooked into a complex organisation. This manifests itself in a lack of prescription about the tools you use and the steps you take to produce an outcome, even if the outcomes themselves are highly regulated. Obviously there are limitations to this, such as the governance of research ethics, but even this has important collegial aspects to it in the sense of learned societies formulating standards which researchers engage with as moral agents, in contrast to the much more anormative regulation which comes from their institutions. It’s much less easy for insecurely employed academics (or indeed those who have permanent jobs but are low in the hierarchy) to imagine that structural changes in the organisation of labour will inevitably lead to a ‘freeing up’ so that they can focus on the most creatively fulfilling parts of their jobs. In fact, once you recognise that these divergent positions can exist within a single organisation, it opens up complex questions about the relationality between them; if professors can claim the autonomy which generative AI affords then it raises the possibility that more routine jobs might cascade downwards, much as ‘slow professors’ too often need fast postdocs and junior lecturers to keep their responsibilities trundling onwards.

This paper by Markus Furendal & Karim Jebari explores the ambiguous character of the claims made about human-computer collaboration. They suggest this can work to either augment human capabilities or stunt them; both cases involve increases in productivity but the latter “prevents workers from attaining the goods of work while also bringing about significant bads of work”. Automation in this sense is a process in which “machines increase labor productivity, i.e., the value of produced goods and services per work hour, by performing some or all tasks that were previously done by humans”. It’s interesting that this definition excludes cases where the execution of a task is switched to a third party, which they note can increase profitability but is not a contribution to productivity, i.e. the same task is still being done by someone. I suspect this kind of shadow work is going to grow rapidly within higher education, with academics and students being expected to undertake tasks which were formerly mediated by or entirely conducted by professional services staff. There will likely be a veneer of inclusive personalisation through chatbot interfaces but the economic logic of such developments seems clear, even if they don’t constitute a contribution to productivity as such.

This is how they outline the two scenarios:

The labor replacing scenario implies that we primarily substitute machines for human labor, rendering human workers redundant, as, for example, elevator operators became redundant by improvements in elevator safety. This would be undesirable, it is argued, as technological unemployment might cause popular discontent. In turn, it might also lead to political and social obstructions of technological progress (Gallego & Kurer, 2022). By contrast, the labor enabling scenario implies that machines primarily make human workers more productive, by creating the kind of centaurs mentioned above. For example, office workers have become more productive due to word processing software (at least with regards to the quantity of written output) and stand to become even more productive in the near future as generative AI technologies, such as chatbots, are integrated into the same software. Similarly, since machines are well suited to perform dull and repetitive tasks, AI automation could make both manual and office workers more productive and potentially more satisfied.

https://link.springer.com/article/10.1007/s13347-023-00631-w

Their attempt to get beyond a simple distinction between the undesirable labour-replacing scenario and the desirable labour-enabling scenario is extremely relevant. They suggest that a focus on aggregate output often ignores the question of how these technologies “impact the quality and character of individual jobs”, including “the way in which the burdens and benefits are distributed within and across groups”. Their distinction between human augmentation and human stunting provides an analytical framework through which we can make sense of the micro-social and meso-social outcomes of a process that might look similar at the macro-social level of productivity.

A further distinction is needed, between two ways in which labor-enabling technologies can increase productivity: human augmentation technologies which promote workers’ pursuit of a variety of objective goods primarily accessible through one’s work (see Scanlon, 1998 for a discussion on objective goods); and human stunting technologies, which conversely increase productivity in ways that make the job worse for the individual workers, either by harming workers in some way or by preventing the pursuit of the work-related objective goods.

This entails the claim that work can be more or less ‘good’ or ‘meaningful’. There are obviously “jobs that are dangerous and can result in bodily injury, mental illness, or decreased welfare of those who perform them”. They draw on Anca Gheaus and Lisa Herzog’s account of four kinds of good which can be enjoyed through work, drawing attention to the manner in which the reliance on paid employment squeezes out the time to pursue these goods outside of paid work:

- The pursuit of excellence through the development of one’s skills

- The possibility to make a social contribution

- The receipt of social recognition for one’s activity

- The experience of community in working life

In this sense the traditional role of the academic seems high in potential goods. But as Gheaus and Herzog note, organising work in ways that hinder the pursuit of these goods or fail to protect other goods (e.g. discretionary time) leads to the production of ‘bads’, albeit of a more subtle sort than the immediate possibilities of physical and mental injury discussed above. The modern role of the academic seems prone to bads in this sense, being organised in ways which frustrate the realisation of goods which are sometimes experienced but often tantalisingly just out of reach.