- Underlying the discursive explosion about ChatGPT is what feels to me like a peculiar enthusiasm for disruption. In making this speculative suggestion I’m not questioning the implications of the technology, only drawing attention to what might be going on when we talk about those implications. It feels similar to the epochal discourse which has surrounded other technologies in the past (e.g. ‘big data’) in the sense of proclaiming that we have entered a new world with new rules. However, in this case gloomy expectations have replaced enthusiastic claims, in spite of the shared assumption of inexorable technological change. Is there a certain pleasure to be found in assuming that existing assessment practices will be swept away in a tide of change? Have we been longing for a force beyond the university to come and undermine the often stifling bureaucracy which surrounds teaching and learning?

- The reason I’m not doubting the significance of this technology is that Microsoft plans to incorporate it into Office and Bing following their $1 billion investment in OpenAI in 2019 (and allegedly another $2 billion since then). It was trained on Azure cloud infrastructure and this indicates a longer term collaboration which builds on the existing autosuggestion features which many people, including myself, have used for years in products like Gmail and Outlook. If Microsoft’s intended $10 billion investment comes to pass then this suggests the possibility of an arms race as other cloud providers rush to compete with Microsoft’s privileged relationship with a company it will own 49% of (with the non-profit parent of OpenAI owning 2%, leaving 49% for other investors). If this is rolled out effectively enough that it becomes a routine feature of office software rather than a novelty, it creates the incentives for other firms to immediately try to match and exceed it. Google are already planning to incorporate their large language model into their search product.

- The costs involved in training comparable models will mean this is something which only the biggest tech firms can support. With each iteration “the inputs in data, energy, and money grow exponentially” and rely upon world class supercomputers. Presumably there is also a path dependency here where progression through subsequent iterations of large language models is necessary, as opposed to being able to start from scratch at something akin to GPT-4 which is coming this year with 100 trillion parameters in comparison to GPT-3’s 175 billion. If I’m correct about this (and please let me know if I’m not!) it means that firms would have to follow each other through these development trajectories in order to remain competitive rather than being able to catch up with a single act of investment. If this is a medium to long term process then it means firms with uncertain future prospects (which I increasingly think is the case for Meta, for example) might end up dropping out in a Darwinian battle for AI supremacy over years and decades.

- Even if ChatGPT has received the most attention, we need to remember that text interaction doesn’t exhaust the limits of large language models. Microsoft’s VALL-E can replicate voice patterns based on a three second clip. DALL-E generates images based on natural language prompts. There is a range of text to video generators which could have astonishing implications once there are more well developed consumer and business facing applications. There’s a developing database of GPT uses here. As other large language models start to become operative within the social world, we can expect a degree of differentiation based on market segmentation (e.g. low/high end consumer/business etc), resource intensity (e.g. casual consumer uses as opposed to, say, particle physics) and perhaps the underlying capacities of the models if there are even small differences between them.

- If these technologies will be ubiquitous in a matter of years then we need to support students in understanding and using them. Simply prohibiting them because they’re disruptive to existing assessment practices is pretty useless from the student point of view if they are going to be routinely using these technologies as part of their working life. The novelty which surrounds them means there is a lack of cultural scaffolding about how they are used, for what purposes and what meanings these different uses have. This means interrogating the epistemological and normative basis of their use, which Horning compares to the process through which Wikipedia became normalised on the assumption that most contributors tend to be well-intentioned and erroneous information will tend to be corrected over time. It means reaching some viable conclusions about how to distinguish between better or worse applications of generative AI in different domains of creative activity, as well as how to explore these together with students, ideally in the context of reformed summative and formative assessment. How clear are we currently able to be about the learning outcomes we would want to promote under conditions where generative AI is ubiquitous? Ted Underwood suggests we should be thinking about the world students will inhabit in the 2030s where students might be using these tools as research assistants and editors.

- To the extent that what David Graeber called ‘bullshit jobs’ rest upon the transformation of existing information (i.e. moving information around, reframing information in routine ways) or the creation of external facing outputs in routine ways (e.g. writing press releases, content marketing, descriptive news reporting) there are reasons to be concerned that this could in the medium to long term lead to a massive loss of white collar jobs. This would compound existing pressures in an increasingly hybridised working environment which will tend to increase competition internationally between white collar workers. This is the working environment which our students will be entering and we need to find out how to prepare them for it. If this turns out to be the case then what implications does this have for the training of future models and their application in specific domains?

- Generative AI calls into question the status of description in the most global sense. As Horning puts it, it facilitates “some sort of expression of the social average without my having to directly interact with any actual people or see myself intersubjectively constituting any part of that social average”. There’s a fairly radical implication for how we conceive of the socio-cultural domain if this framing is accurate (which I think it is) as well as more broadly how we imagine the reliable reiteration of established descriptive facts as a marker of knowledge and expertise. If this is a faculty available to anyone with a computer (or at least the right software) then alternative markers of knowledge and expertise will become more important e.g. evaluation of novelty, improvisation, creative expression, aesthetic particularism. I wonder also if building ‘the social average’ into the socio-technical infrastructure of everyday life will be a counterweight to the post-factuality which social media has given rise to. Or will it generate new layers of conspiracy and paranoia?

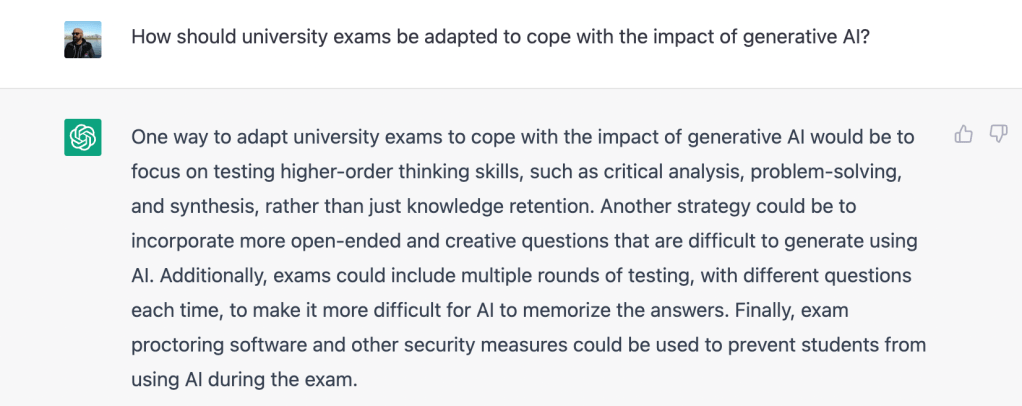

- To the extent assessments rely on the reiteration of facts from within a domain of knowledge in a way deemed suitably authoritative, they will be undermined by generative AI. Obviously one solution is to monitor and control more intensively e.g. proctoring software which blocks ChatGPT, moving to in person exams, using detection systems like GPTZero. But I’m increasingly convinced (and thanks to my colleague Drew Whitworth who got me thinking seriously about this) that we instead need to move towards assessments which encourage those markers of knowledge and expertise which students will actually be subjected to in a world where generative AI is ubiquitous. I’m delighted to find that ChatGPT agrees with me here:

A few more thoughts which were swirling around in my mind as I’ve been thinking this through:

- The comparison between ChatGPT and Wikipedia is an apt one. In common with many others, I suggest to students that Wikipedia should be a starting point rather than ending point because there are weaknesses built into the model which mean we cannot assume its reliability. Likewise with ChatGPT the ‘hallucination rate’ is estimated to be between 15% and 21%, an improvement from its predecessors’ 20% to 41% but still worryingly high. The discourse of inexorability that surrounds generative AI (see point 1) risks creating a climate in which this tendency to make stuff up is erased or relegated to the status of contingent error. Up voting and down voting is a form of feedback which establishes user satisfaction rather than factual accuracy. In fact if generative AI comes to increasingly define the domain of factuality then how will it be fact-checked? Will the social average come to substitute for legitimated consensus? In this sense it will radicalise the post-truth tendency of social media, including feeding back into these dynamics in accelerating fashion as the cultural outputs of generative AI circulate through the attention economies of social platforms.

- However, a focus on the mediocrity of much of ChatGPT’s output is seemingly oblivious to the mediocrity of much cultural output which doesn’t rely on generative AI. Relying on more or less routine procedures which trend towards the social average isn’t a productive process which was invented by generative AI; it merely formalises and accelerates a tendency which was already present in the bureaucratisation of cultural production. There was already an infoglut in which we are drowning in cultural abundance (a sharp transition from the position of relative scarcity which characterised my education in the 90s and much of the 00s) which is going to be entrenched by the acceleration of production which generative AI will give rise to. The drivers of cultural production are still in place, with generative AI simply making it easier to produce more and quicker.

- If a new winter of tech investment is beginning when firms are reducing staffing numbers, will they be ready for an influx of misinformation and propaganda driven by generative AI? The ease with which text-to-video could facilitate engagement through a platform like TikTok is really quite terrifying and inherently difficult to recognise for anyone other than the platform operators. I think the early fixation on ‘deep fakes’ as strategic interventions obscures the possibility that we might just be overwhelmed by a torrent of shit which renders the internet unusable. Like social media but more so, as Neal Stephenson explored in Fall, or Dodge in Hell (or was it the prequel to this, I can’t remember, I’ll need to ask ChatGPT).

- I’ve written a few times in this blog about generative AI becoming ubiquitous. But its effective use in specialised contexts will likely depend on domain specific implementations which will be constrained by the competitive dynamics of the generative AI arms race. It will be in the interests of big tech end users to lock down these models for commercial sensitivity but that will hinder the development of more particularistic applications focused on particular areas of social life or particular use cases. Even if these are developed by tech giants, they’ll be done at global scale and at a distance from practice on the ground, likely being poorer for it in terms of effectiveness for the end user.

- My experience of relying on autosuggestion in writing e-mails is that it necessitates a slightly different writing process. If you stop and think about every suggestion, it takes longer than if you just (touch) typed yourself. But if you lean into the tendency it is highlighting in the writing, tapping tab when it is correctly identified and continuing to type when it’s not, it functions more like a ghostly aid who can be easily dismissed. This leaves me wondering about what habituated reliance on more complicated forms of generative AI will look and feel like in practice. There’s a lot of reflexivity needed here about creative processes, workflows, practical implications and I wonder where the spaces will be in which these dialogues can take place.

- If we reject the knee jerk option of banning GPT then we’re immediately faced with the challenge of how to use it in assessments. Nancy Gleason cautions against using it in all assessments: “We can teach students that there is a time, place and a way to use GPT3 and other AI writing tools. It depends on the learning objectives”. She suggests that it’s important that academics look at what ChatGPT outputs look like within their domain in order to understand the similarities and differences to what they would already expect from students. If writing with AI changes the nature of the writing process then, she points out, new rubrics and assignment descriptions will be needed.

- Anna Mills suggests modelling and critiquing outputs with students as part of a broader push for critical AI literacy. I really like this suggestion as a practical way of incorporating ChatGPT into the classroom and supporting students in recognising the constraints and mistakes which generative AI is prone to, at least in its current incarnations. Paul Fyfe has been assigning students the challenge of ‘cheating’ on their assignments using text generating software.

- The scholars participating in this discussion are often highly experienced writers who have been doing this as part of their job for decades. Are they making scholastic assumptions about how students will relate to generative AI? Is the experience and use of a writer likely to be extremely different to that of a student? What happens to the next generation of writers (and the social average upon which generative AI depends) if the process of learning to write is stalled by an overly hasty embrace of ChatGPT? Sarah Levine has the uplifting suggestion that it might in fact help students to become “fluent enough writers to use the process of writing as a way to discover and clarify their ideas” because it allows “students to read, reflect, and revise many times without the anguish or frustration that such processes often invoke”. She also makes the interesting point that generative AI will expand the repertoires of examples which teachers can use in the classroom.

- These points highlight the need to understand how students are using generative AI as part of the writing process (empirical question) and for these findings to inform debates about how they should use it (normative question). Is it used as a research assistant and editor (Ted Underwood) or a substitute for creative engagement? If students are going to be using it anyway, particularly after it has been incorporated into Office, moving them towards the former pattern and away from the latter seems imperative. As Victor Lee puts it, we need to “use AI for humans rather than in place of human”.

- Chris Lemons observes that generative AI could be used for inclusion by developing smart tutoring which is responsive to student needs.

So what do we do in the near term?

- Not overreact on the basis of techno-hype

- Warn students about (existing) limitations

- Find ways to detect and mitigate malpractice

- Experiment with incorporation into teaching/assessment

- Explore longer term implications with students

- Begin to rethink pedagogy and assessment

I’ve not watched these yet but I’ve seen Charles Knight make some interesting comments about this on LinkedIn. The video below is one of a series which I intend to watch as I go further into this topic: